- by Rachael

Early Access is available to all next-gen creators and their fans who have a Meta Quest 2 or Rift S.

Easy content sharing is available in Flipside Studio Early Access where you can create, watch posts, remix content, and more! Welcome to the public early access launch of this new tool! Here’s what’s included:

Visit flipsidexr.com/get-early-access to sign up.

It's back-to-school time, and we know that instructors are getting geared up to provide future innovators with the skills they need to succeed. Are you an educator interested in using Flipside in the classroom? Join our educators' mailing list and let us know.

Thanks to everyone who notified us of bugs!

CLICK HERE TO FILE A BUG REPORT

Thanks to everyone who shared feature requests! We love the ideas!

MAKE A FEATURE REQUEST

We’d love to welcome you to the Flipside Studio Community Discord channel where you can connect with other creators, share your #MadeInFlipside creations, submit feature requests, and share bug reports.

Join the Community


- by Rachael

We love seeing what you do with multipart recording and this update includes improvements to this feature! We’ve added the ability to hide, mute, and delete parts in an existing recording! This means you can modify existing recordings to add, remove, or redo parts. This update makes it easier to build your perfect scene!

Our new Meet and Greet set is perfect for your VR creations. Check out this double feature! We loved using the Meet and Greet sets following the release of these two major blockbusters.

It’s a Barbie-Oppenheimer World! Give these sets a try, share using #MadeInFlipside, and let us know if you’re #TeamBarbie or #TeamOppenheimer!

Comedic carrot wins it all! Congratulations to Bill Eckman, winner of the AI Script creator contest. His video, Can Vegetables Be Funny, made us laugh and was a great showcase of the AI Script creator tool, powered by OpenAI’s ChatGPT.

Thank you to everyone who entered a video in the contest!

Improvements to multipart recording: We’ve added the ability to hide, mute and delete parts in an existing recording.

New sets and characters: We’ve got Meet and Greet sets and a Balloon wall set for you to create with. And an additional 100 avatars will help tell your story!

Bug fixes and improvements:

Read our release notes here.


- by Rachael

Do you need inspo for developing a script for your #MadeInFlipside creation? The new AI Script Creator (beta) tool is here to help! Describe the premise, how many characters you’re working with, and the content style, and the AI Script Creator will develop a script you can load in the teleprompter!

We know you’ll have a blast!

We want to see how you use the AI Script Creator (beta)! Enter to win a $100 Amazon gift card when you use the AI Script Creator to generate a script and record a piece of content using that script in Flipside Studio.

Details:

We’re excited to see what you create using the AI Script Creator (beta)!

Your favorite VR avatars are now in Flipside Studio! We’ve integrated some of the go-to avatars from the 100 Avatars asset pack for you to create with.

Get your sci-fi on and explore everywhere with the new Command Deck set. New props are also included in this update and they're perfectly paired with this new set!

AI Script Creator (beta): Describe the situation and the style you’re looking for and let the AI Script Creator (beta) develop a script for you. This new tool is powered by OpenAI’s ChatGPT and you’ll find it in the teleprompter in the main menu of Flipside Studio.

New characters, set, and props: Use the uploaded characters from the 100 Avatars asset pack in the Command Deck set, along with new sci-fi props in your creations!

Bug fixes and improvements:

Read our release notes here.


- by Rachael

We're back with a new set fit for a king or queen, plus new coloured spotlights and the ability to create with AI-generated sets (and more!).

Previously we’ve shared how AI can help with creating scripts and voices to assist with your creative process. Now we’ve officially brought AI into Flipside Studio with the new AI set creator (beta)! With this tool you can describe a set, choose an art style, and watch it appear around you. AI-generated sets are 360 panoramic images and are saved to your imported sets so you can use them again and again.

Don’t forget to share with #MadeInFlipside so we can marvel at your creativity!

Thanks to everyone who joined us last weekend for the Flipside Studio Creator Jam! The Creator Jam continues all week and through to Sunday, May 28 so there’s still time to join!

Read all about the Flipside Studio Creator Jam and the first prize here: https://www.flipsidexr.com/creator-jam

The newest set is here! Create like a King or Queen in the new Throne Room set that’s ready for you in the Flipside Studio app!

We love seeing what you #MadeInFlipside! Thanks to @StricklyImmersive for using Flipside Studio for your multi-part series on YouTube! We love the inclusion of so many characters and the creative set you’re using.

AI set creator (beta): Describe a set, choose an art style, and watch it appear around you. AI-generated sets are 360 panoramic images and are saved to your imported sets so you can use them again and again. Find the new AI set creator (beta) in the Imported category of the Sets menu.

Voice input: We’ve added a voice input button on the virtual keyboard so you can input data just by talking.

Coloured spotlights: You’ll find red, yellow, and blue lights added to the Show Tools category.

You'll also find other bug fixes and improvements in this update!

Read our release notes here.


- by Rachael

This is our most requested tutorial so we’re sharing it again. You can also find this tutorial in our in-app help menu! Learn how to get your content out of Flipside Studio so you can share it on social media, in presentations, and more!

Don’t forget to use #MadeInFlipside when you share online and tag @flipsidexr so we can give you a shout out! Thank you to Twitter user @7irovoice for sharing - we love seeing what you create!

We’re setting the stage with the newest Flipside Studio update! You’ll find a new Lounge set ready for you in the app! 🎤

ChatGPT is a unique tool to develop scripts and creative ideas! ⌨️

WhatEver Films & Photography used ChatGPT artificial intelligence to write out the entire script and story of “Sense of Adventure.” They used Flipside Studio to turn the script into a video, which they shared on YouTube.

How will you use AI to develop script ideas?

Prop locking: This new feature allows users to lock props, making set dressing much easier.

Camera settings: Your camera speed, movement, and shake settings are now reflected in Flipside Broadcaster.

Upload visuals to use in your slideshow: You can now upload your slideshow photos and videos directly to a project through the Creator Portal!

Read our release notes here.


- by Rachael

You’ve made it, now share it! Learn how to get your content out of Flipside Studio so you can share it on social media, in presentations, and more!

New assets are here! With the newest update of Flipside Studio, you’ll find great new characters and a fun set.

Give our new "cute pup" character pack a try. We can’t wait to see what you create with four new cute pups and a dog park set!

The Flipside XR team loves seeing what you #MadeInFlipside! Feel free to use the hashtag and tag @FlipsideXR in your creations!

Thanks to David, aka Democratize XR, for the great idea of using real-world locations in Flipside Studio! See how he uses lidar scans to create a set to use in the app!

Looping audio resolved: If you used the spatial audio setting and heard looping audio, the issue has been resolved!

Ability to delete avatars: If you’ve been creating your own Ready Player Me avatars through the Characters menu, you now have the option of deleting any character you no longer need.

Stand-in fix: Did you create a character stand-in, but no character showed up? The issue has been fixed and character stand-ins are working properly.

Read our release notes here.


- by Bohdan Hlushko

We’re thrilled to announce Flipside Studio is LIVE in the Meta app store for Meta Quest and Rift/Rift S headsets! 🎉

Content creators, make any room your stage with Flipside Studio!

Imagine and create your story with fun and imaginative show tools including:

🎬 Sets and props

🎥 Professional production tools

🙋‍♀️ Character customization including @readyplayer.me

🎞 Motion capture and scene layering

👱‍♀️🤝👦🏿 Collaborate with folks worldwide

Thank you to the thousands of creators who have been with us testing the app. Thank you too to our tireless team for all of their hard work and creativity! 😉 We’d love to stay in touch and hear your ongoing feedback about Flipside Studio! Thanks again for your continued support.

We can’t wait to see what you create! If you’re sharing on social, tag us at @flipsidexr and use #MadeInFlipside so we can share your creativity.

Keep creating!

- by Bohdan Hlushko

We've been cooking up something special for the past six years, and we're excited to finally share it with you. Flipside Studio is almost here, and we're putting the final touches on this amazing virtual production platform.

For those of you who don't know, Flipside Studio is a one-of-a-kind virtual production tool that lets you create and customize your own animated shows in virtual reality that you can share online.

We couldn't have done it without the amazing support of our beta testers. Thank you for your valuable feedback and insights, which helped us fine-tune Flipside Studio to be the best it can be.

So, sit tight and get ready for the ride of your life. Flipside Studio is coming, and it's going to be a blast! Stay tuned for more updates, and thanks for being part of our journey.

- by Starling

Stand-up comedian Jordan has found a way to add value to companies and engage workers, while developing his own skill sets, thanks to virtual technology.

We sat down with Jordan in Flipside Studio, as avatars in a virtual setting, to find out how Flipside Studio has helped him move to the next phase of his career and entertain more corporate audiences without being limited by location.

“I'm a standup comedian who started doing that (comedy) about nine years ago,” says Jordan.

Jordan isn’t new to utilizing Flipside Studio, as he’s worked on some prior collaborations.

“I started using Flipside when you (Flipside) reached out to me through some mutual friends about getting involved with Flipside in the Bay Area. You were doing a thing down there and we had a link up and worked on a show together; Earth From Up Here. That was a lot of fun. Since then, we've made a couple of other projects together and worked on some creative collaborations a number of times using Flipside.”

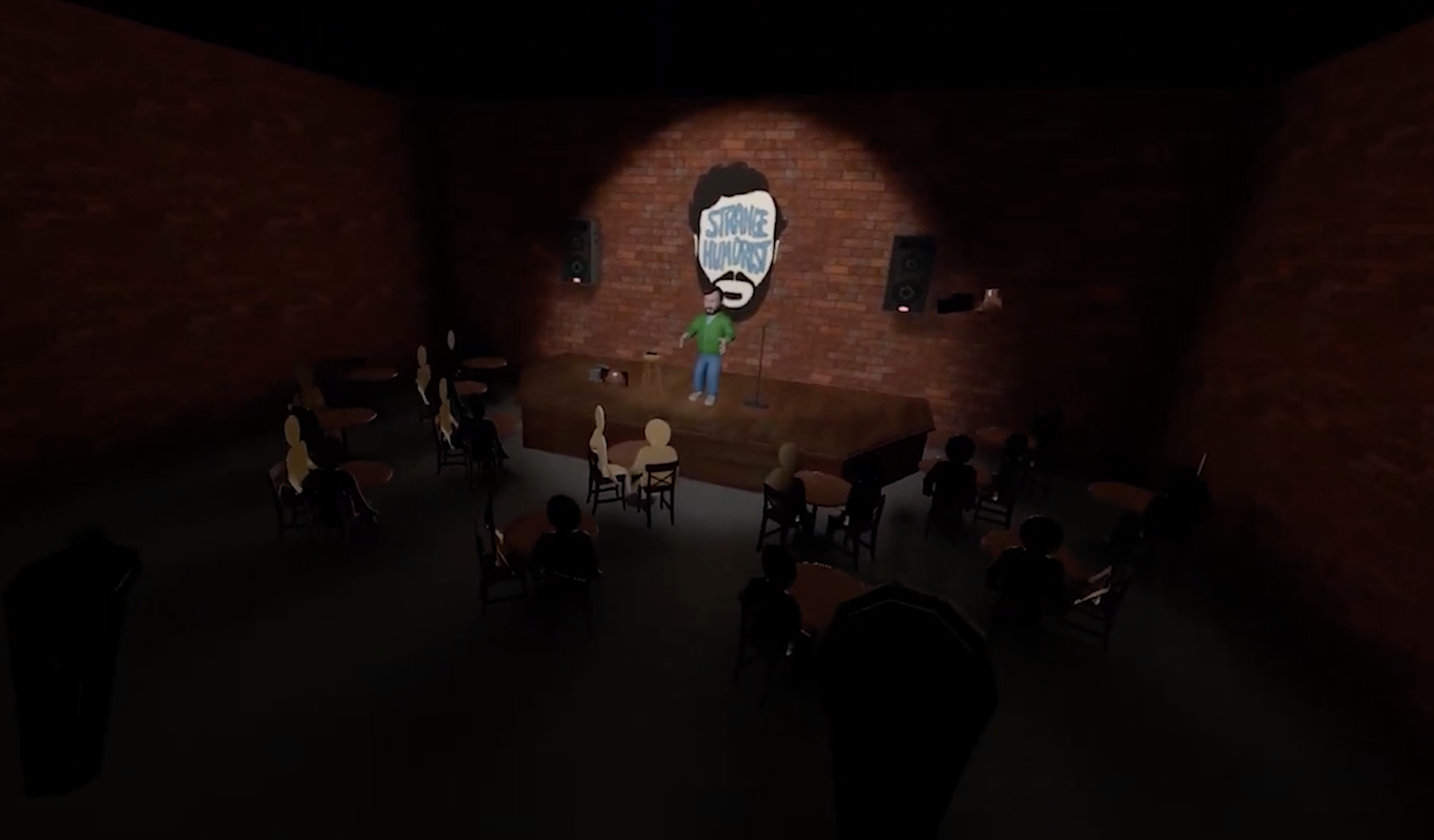

During the pandemic, Jordan witnessed comedians going online. He found a way that he could carve out his own niche in the virtual comedy space. Jordan created a comedy club set design that looks and feels just like a regular club, except it’s online.

“I've been running a little business for myself where I do…remote virtual holiday parties and comedy shows in this set. This is my comedy club that I continue to add to and improve as time goes on.”

“I saw an opportunity to not only put myself into a Zoom meeting with this being my camera's input, you know, the output of a Flipside, but also kind of getting out of self-produced shows and booking myself as a corporate entertainment.”

Earth From Up Here - Watch it on YouTube

“I think a lot of the challenges for me as an immersive performer was embracing being an immersive performer,” says Jordan.

Virtual reality has allowed Jordan to integrate aspects of his humour that are better suited to online performances than to an in-person setting.

“Some of the stuff that I do on stage in my virtual club, I would be able to do in real life, but it would take so much more planning and infrastructure.”

This has given Jordan an advantage in his field as he provides a unique and layered experience for his corporate clients and their employees. Using Flipside Studio, Jordan is able to live-stream to all of his clients’ employees, reacting and adapting in real-time to their feedback and needs, and providing a show tailored specifically to the client.

“I think a lot of people aren't embracing the fact that you have a lot more to do in here than is readily available to you in a very tough market. I feel like I'm positioned really well to make a new start for comedy in the Metaverse or, you know, whatever we want to call it.”

“This is a tool that I have right at my fingertips as I'm showing people what I want them to see.”

Jordan is more than a comedian. Utilizing Flipside Studio, he’s learned that he’s fully in control of the experience.

“It's essentially a different skill set than just being a comic. It's also being a producer in the same moment. You're editing your own content in real-time and you're the director. You get to choose what the audience sees.”

Utilizing Flipside Studio, Jordan is bringing the joy back to online meetings and bringing people together. He understands why corporate clients are connecting online for bonding opportunities, though he recognizes it may feel a bit unusual for employees.

“Sometimes maybe some people are like, ‘wow, that was really weird. I can't believe we just got paid to watch a cartoon.’”

“Nobody really wants to be in a Zoom meeting anymore, but I feel like this particular tool for me has made it more enjoyable.”

Les interviewing Jordan in Flipside Studio

For Jordan, the tools offer a lot of benefits that let him deliver an elevated experience for his clients.

“The biggest thing that sets everything apart is the production value. I mean, there's so much that you can do as a single entity where, you know, if you wanted to film us a sketch and in Flipside, you could do it by yourself. You could record all the parts by yourself and have a motion capture recording of you doing one character, leaving a pause for a potential other line and step in is that person. You could do a full-blown production with a really quality end product by yourself.”

This is just the beginning for Jordan.

“I'm starting to see so many opportunities for the future of what this could be, and it's really an exciting time for me.”

“I can explain myself as a funny person much quicker with all of these tools. I get to pack more of a punch with all these tools, and that's why I love Flipside so much.”

Jordan performing standup live using Flipside Studio

While Jordan has a lot of experience utilizing Flipside XR tools for past productions including Earth From Up Here, he’s really found his niche utilizing Flipside Studio to create, host, and produce his interactive, virtual stand-up comedy shows. Offering his comedic services to corporate clients who are looking to engage their employees, Jordan delivers value to companies and joy to employees through his live streams, regardless of how spread-out a company’s workforce is.

For Jordan, his business is expanding and his needs are simple:

“The truth is I'm making more money than I ever have in entertainment, and I don't have to live anywhere. As long as the Wi-Fi is good, home is where the Wi-Fi is good.”

Want to start making your own mocap animation? Click here to learn more about Flipside Studio.

- by Starling

“This is one of the greatest things I’ve ever done, like I’m a live cartoon right now . . . You have to understand how weird it is that I’ve done more stand-up as an avatar than as a real person [in the last year].”

Rodney Ramsey has been doing comedy for 20 years: touring, comedy clubs, festivals. All of those things went away when COVID-19 arrived. Comedians had to take their acts to the virtual space, with most either doing Zoom shows or TikTok videos. Rodney wanted to take a different approach to bring that live/televised comedy show vibe back into the mix, and as someone with VR and production skills, he knew Flipside Studio was the vehicle to do it.

We chatted with Rodney about how hosting comedy in VR has taken his career to new places, making him busier than ever before.

“I was always kind of the class clown and during college, I was bored. Then I did stand-up and it was like, wow, I've never had that much fun. That was it for me,” says Rodney. He started out in Montreal and, after exploring other cities while touring, decided to stay in Montreal to build his career.

“All the artists in my industry, they either go to New York or L.A.,” Rodney says. “I'm in this weird, cool artistic thing where everything is supposed to be creative, yet everybody does it the exact same way, which never made any sense. So I said, ‘I'm going to see if I can do this from Montreal.’ And it's been going great.”

Rodney has always taken an experimental approach to comedy, creating different productions to see what lands with his audience. His first production was called The Drunken Show where both the performers and the audience would get drunk (and maybe a little high) and just see what would happen.

“We still do it, it’s insane.”

Before, he averaged around five productions a year. Since taking his shows into the virtual space with Flipside Studio, he has accelerated to the point where he’s already done 60 shows this year.

“It took me a while to get here, you know what I mean? It's not easy. You really got to either have the knowledge or really want it.”

Incorporating real-time VR mocap animation into Rodney’s act didn’t happen overnight. Luckily, being a stand-up comedian developed a lot of the skills he needed for production.

“Stand-up means that you have the tools to do a bunch of things . . . it means you can write, so I write. It means you can act, so I act. It means you can direct, so I try to direct stuff. And you're always editing.”

Those skills, combined with a love for tech, specifically VR, and the COVID lockdown situation led him to create The Unknown Comedy Club with co-founder Daniel Woodrow.

“What makes this thing special is the production value . . . people need to feel like it’s television.”

Rodney hosts The Unknown Comedy Club shows as a cartoon avatar and delivers his stand-up while operating the camera in real time.

“The cartoon looks good. It's super solid. And then I'm like switching cameras real time while telling jokes, man. I'm also morphing into different characters.”

Rodney is always surprised how quickly people get comfortable with the avatar and don’t even question it.

“I will be sitting at my desk [as an avatar] and I'll pick up coffee and start drinking the coffee. Nobody says anything ever, until I point it out. They're there with you, you know?”

Being into tech, Rodney had the idea to merge VR and stand-up comedy and tried several apps before discovering Flipside. What made Flipside Studio work for him was the real-time mocap animation capabilities, along with the integration with Zoom. It’s now gotten to the point where some people come to the shows just for the avatar.

“They're coming to see the avatars, you know what I mean? They're like, yo, this guy over here, he's doing a show on the moon. There's a supernova happening in the back. You know, it ties it to the punch line. It's crazy.”

Most of the other comedians in the show don’t perform as avatars. They perform in front of a green screen so Rodney is able to match the backgrounds, and the whole production feels a lot more like watching live television than watching a standard Zoom call.

Doing stand-up comedy using VR mocap animation has made Rodney’s brand of comedy into a niche he occupies with very little competition, but his goal is to bring more comedians into VR.

“I'm looking at it like the avatar show. That's all I want. I want to be able to have a bunch of people do it. If I can collab with a bunch of people who are avatars from wherever they are, that is insane to me.”

“We did our first show in January and then we're like, oh my God, that was amazing. The comics were happy. We were happy. The crowd is happy. And when I'm in there, I feel like I'm on stage. I feel like I'm performing in front of a crowd, even though they're in Zoom boxes, like I'm on my stage. I have them in front of me—boom, showtime.”

The next week they did another show, and so on, until they had the hang of the format and wanted to do more with it. For the last nine years, Rodney and his co-creator Daniel Woodrow had been running the Underground Comedy Railroad, an all-Black comedy tour. It had always been in person, but if they were going to do it this year it would have to be virtual. They had three weeks to pull it together.

“We did a five show tour with Flipside. This is like a huge thing for us because before that we weren’t a club . . . We made more money that weekend, virtually, than we had in the nine years that we've been doing the show, leaving our house.”

With all the comedy clubs shut down during the pandemic, The Unknown Comedy Club has been a way to keep the shows going, get paid, and pay other comedians as well. Just for Laughs took notice: Rodney is performing more shows at the internationally acclaimed comedy festival this year than ever before.

“Flipside plus Zoom plus a few other things, you know what I mean. Like yeah. Magic.”

Find out when the next Unknown Comedy Club show happens and get your tickets!

Catch Rodney’s act at Just for Laughs and watch out for Rodney's JFL Originals comedy album to be released this fall.
